Stereophonic recording creates an illusion of a sound event through reproduction by loudspeakers, as opposed to binaural recording, which attempts this reproduction via headphones.

When a stereophonic recording is heard through a pair of loudspeakers, each ear hears sound from both speakers. This is not the case with headphones. The localization process in human hearing therefore differs between the two kinds of sound reproduction. Here we concentrate on stereophonic recording and playback via loudspeakers, focusing on the localization of sound sources in the horizontal plane and on spatial impression.

Stereophonic recording and reproduction go back to Alan Blumlein’s invention.

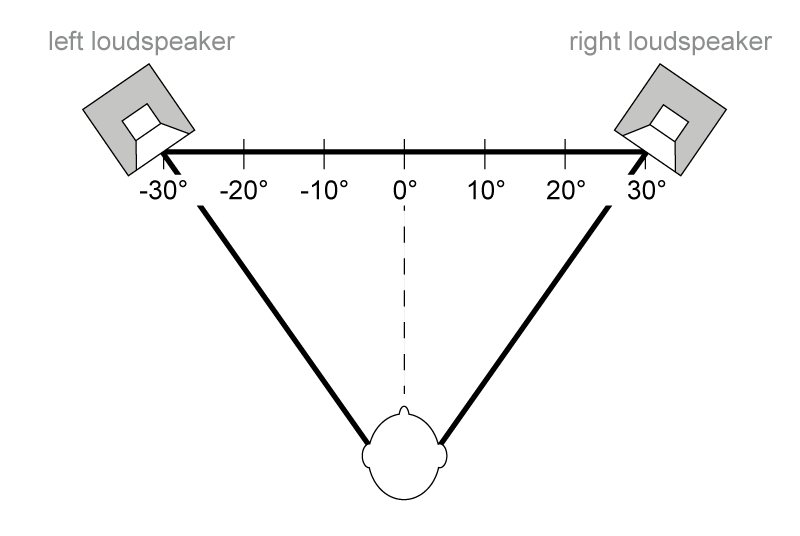

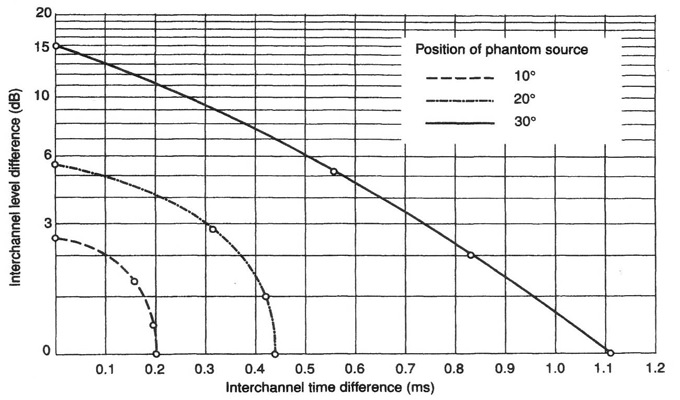

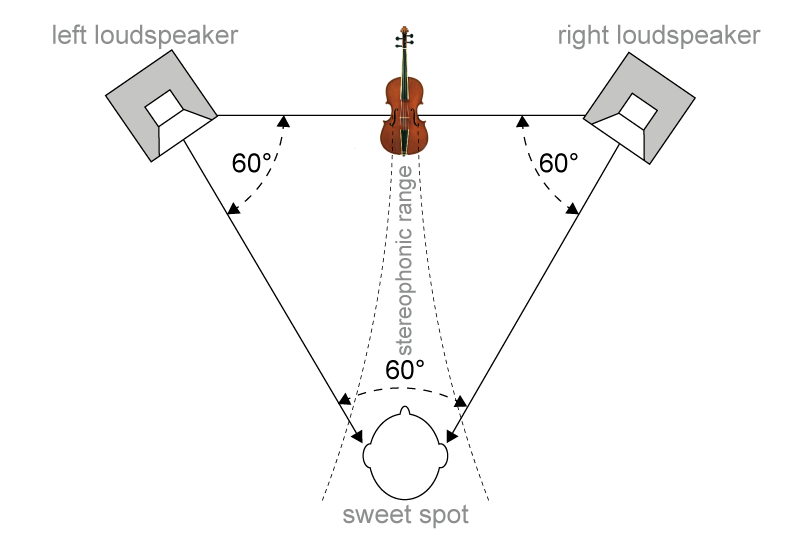

He discovered that the localization of a sound source within the stereo base of two loudspeakers can be controlled by the ratio of the levels of the left and right audio signals. This is referred to as intensity stereophony. A balanced image of the sound stage, with phantom sound sources within the stereo base, can be created by an appropriate recording technique (e.g. a Blumlein pair microphone setup) and by placing the two loudspeakers and the listening position at the corners of an equilateral triangle. With intensity stereophony, localization at the loudspeaker positions is excellent and is still good for positions in between.
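
As a minimal sketch of how a coincident pair turns source direction into a pure level difference, the following assumes ideal, frequency-independent figure-8 microphones crossed at ±45° (the Blumlein pair mentioned above) and a source in the horizontal plane. The function names and the sign convention (positive azimuth toward the left) are illustrative assumptions, not part of the original text.

```python
import math

def figure8_gain(source_deg: float, axis_deg: float) -> float:
    """Ideal figure-8 response: cosine of the angle between source and mic axis."""
    return math.cos(math.radians(source_deg - axis_deg))

def blumlein_level_difference(source_deg: float) -> float:
    """Left-minus-right level difference in dB for an ideal Blumlein pair.

    Assumptions (hypothetical, for illustration): left mic axis at +45 deg,
    right mic axis at -45 deg, positive azimuth toward the left. Only magnitudes
    are compared; beyond +/-45 deg one channel is picked up in antiphase.
    """
    g_left = abs(figure8_gain(source_deg, +45.0))
    g_right = abs(figure8_gain(source_deg, -45.0))
    if g_right == 0.0:
        return math.inf
    if g_left == 0.0:
        return -math.inf
    return 20.0 * math.log10(g_left / g_right)

# Level difference for a few source directions between center and the left mic axis
for az in (0, 10, 20, 30, 40):
    print(f"{az:2d} deg -> {blumlein_level_difference(az):5.1f} dB")
```

A centered source yields 0 dB; the level difference grows toward the ±45° mic axes, where the opposite channel ideally receives nothing and the source is reproduced by one loudspeaker only.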

Various sound sources can also be positioned within the stereo base with panpots (a process called panning), using frequency-independent level differences. Panning gives each sound source its own place on the stereo base, so the sources don’t have to compete acoustically for the same location.
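
A panpot can be sketched as a pair of gains whose ratio sets the level difference. The example below assumes a sine/cosine (constant-power) pan law, which is one common panpot design rather than the only one, and estimates the resulting phantom-source direction with the tangent law, a frequently cited textbook model that the original text does not mention. Measured localization curves deviate from this model, so the angles are indicative only.

```python
import math

def constant_power_pan(pan: float) -> tuple[float, float]:
    """Return (left, right) gains for pan in [0, 1] (0 = full left, 1 = full right).

    Assumed sine/cosine law: left^2 + right^2 == 1 at every pan position.
    """
    if not 0.0 <= pan <= 1.0:
        raise ValueError("pan must be between 0 and 1")
    angle = pan * math.pi / 2.0
    return math.cos(angle), math.sin(angle)

def tangent_law_angle(g_left: float, g_right: float, speaker_deg: float = 30.0) -> float:
    """Predicted phantom-source azimuth in degrees (positive = toward the left).

    Tangent-law model: tan(phi) / tan(phi0) = (gL - gR) / (gL + gR),
    loudspeakers at +/-phi0 (30 deg in an equilateral triangle).
    """
    ratio = (g_left - g_right) / (g_left + g_right)
    return math.degrees(math.atan(ratio * math.tan(math.radians(speaker_deg))))

for pan in (0.0, 0.25, 0.5, 0.75, 1.0):
    gl, gr = constant_power_pan(pan)
    print(f"pan={pan:.2f}  L={gl:.3f}  R={gr:.3f}  phantom={tangent_law_angle(gl, gr):+5.1f} deg")
```

Because the gains depend only on the pan setting and not on frequency, every spectral component of the source is shifted by the same amount, which is what the text means by frequency-independent level differences.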

Aside from determining the direction of a sound event via level differences, human hearing can also determine directions via time differences between the left and right ear. Accordingly, localization can also be controlled by the time delay between the left and right loudspeaker signals. This is called time-of-arrival stereophony. A suitable microphone setup for it is AB. The spacing of its omnidirectional microphones introduces some phasiness, and the mono sum suffers from a comb-filter frequency response that can alter the sound, especially in the low frequency range. Localization is best at the midpoint of the stereo base and becomes more blurred as the position moves closer to either loudspeaker, in contrast to pure intensity stereophony, where localization is best at the loudspeaker positions.
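
The time difference of an AB pair and the comb filter in its mono sum follow from simple geometry. This sketch assumes a distant source (plane wave), equal levels in both omnidirectional microphones, and a speed of sound of 343 m/s; the 50 cm spacing is a hypothetical example value.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at room temperature

def ab_time_difference(spacing_m: float, source_deg: float) -> float:
    """Inter-channel time difference (s) of a spaced omni pair for a distant source.

    Far-field approximation: the extra path to the farther mic is spacing * sin(azimuth).
    """
    return spacing_m * math.sin(math.radians(source_deg)) / SPEED_OF_SOUND

def mono_comb_notches(delay_s: float, f_max: float) -> list[float]:
    """Notch frequencies (Hz) of the mono sum L + R when R is a delayed copy of L.

    Equal-level signals cancel where the delay is an odd number of half periods:
    f_k = (2k + 1) / (2 * delay).
    """
    delay_s = abs(delay_s)
    if delay_s == 0.0:
        return []  # no delay, no comb filter
    notches = []
    k = 0
    while (f := (2 * k + 1) / (2 * delay_s)) <= f_max:
        notches.append(f)
        k += 1
    return notches

dt = ab_time_difference(0.5, 30.0)  # hypothetical 50 cm spacing, source 30 deg off-center
print(f"time difference: {dt * 1e3:.2f} ms")
print("notches below 3 kHz (Hz):", [round(f) for f in mono_comb_notches(dt, 3000.0)])
```

Larger spacings or more lateral sources lengthen the delay and move the first notch lower in frequency. With unequal levels in the two channels the notches become shallower than in this idealized case.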

If intensity stereophony and time-of-arrival stereophony are combined, this is referred to as mixed stereophony or equivalence stereophony. Here, a sound source never appears in just one channel (only L or only R): the intensity component is not fully exploited, but it is always present to some degree.

The localization mechanisms mentioned above are properties of the human auditory system. The ability of human hearing to identify the direction of sound incidence in the frontal horizontal plane was first described in 1907 by John William Strutt (Lord Rayleigh) in his duplex theory. According to this theory, two cues play key roles when sound arrives at the ears: interaural level differences (ILD) and interaural time differences (ITD), each effective in a different frequency range, ITD at low frequencies and ILD at high frequencies.
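
To put numbers on the ITD cue, here is a minimal sketch using Woodworth’s spherical-head approximation, a standard textbook model that is not part of the original text. The head radius of 8.75 cm and the speed of sound of 343 m/s are assumed typical values, and the formula is only meant for azimuths between 0 and 90 degrees.

```python
import math

HEAD_RADIUS_M = 0.0875   # assumed average head radius
SPEED_OF_SOUND = 343.0   # m/s, assumed

def woodworth_itd(azimuth_deg: float) -> float:
    """Interaural time difference (s) for a rigid spherical head, |azimuth| <= 90 deg.

    Woodworth's approximation: ITD = a / c * (theta + sin(theta)).
    """
    theta = math.radians(azimuth_deg)
    return HEAD_RADIUS_M / SPEED_OF_SOUND * (theta + math.sin(theta))

for az in (0, 10, 30, 60, 90):
    print(f"{az:2d} deg -> {woodworth_itd(az) * 1e6:4.0f} us")
```

The maximum of roughly 0.66 ms at 90° is close to one period at 1.5 kHz, which is one way to see why phase-based ITD cues become ambiguous at higher frequencies, where ILD takes over.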

Because each ear hears sound from both loudspeakers, the level and time differences between the two loudspeaker signals that reproduce a sound stage are not the same as the interaural differences that finally reach the two ears.
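
A simplified phasor model illustrates this crosstalk effect: a pure level difference between the loudspeaker signals, with no delay at all, produces a time difference between the ear signals at low frequencies. The model is an assumption made for illustration. It ignores head shadowing, which is reasonable only at low frequencies, and uses a hypothetical crosstalk delay `tau_s` of about a quarter millisecond for loudspeakers at ±30°.

```python
import cmath
import math

def ear_itd_from_level_difference(g_left: float, g_right: float,
                                  freq_hz: float, tau_s: float = 0.00026) -> float:
    """Interaural time difference (s) produced by two loudspeakers that differ in gain only.

    Simplified low-frequency model (assumption): no head shadowing; each ear receives
    the near loudspeaker directly and the far loudspeaker delayed by tau_s.
    A positive result means the left ear signal leads.
    """
    w = 2.0 * math.pi * freq_hz
    delay = cmath.exp(-1j * w * tau_s)      # phasor of the crosstalk delay
    left_ear = g_left + g_right * delay     # near left speaker + far right speaker
    right_ear = g_right + g_left * delay    # near right speaker + far left speaker
    phase_diff = cmath.phase(left_ear) - cmath.phase(right_ear)
    return phase_diff / w

# A pure 6 dB level difference between the speaker signals (no delay) ...
gl, gr = 10 ** (6 / 20), 1.0
# ... becomes a time difference between the ear signals at low frequencies.
print(f"{ear_itd_from_level_difference(gl, gr, 200.0) * 1e6:.0f} us")
```

For small values of the product of angular frequency and `tau_s`, the result approaches `tau_s * (g_left - g_right) / (g_left + g_right)`.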

The effect of shifting a phantom source by changing the level and time differences between the left and right loudspeaker signals also occurs when the listener changes position. If the listener moves a little to the right, the right loudspeaker signal is louder than the left one and arrives earlier. The phantom source positions within the stereo base are then compressed toward the right, and the overall stereo base becomes narrower. As a result, there is only a small listening area with a full and balanced stereo image, known as the sweet spot.
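
The size of these differences for a listener who leaves the sweet spot can be estimated from the geometry alone. This sketch assumes a 2 m stereo base, a listener shifted sideways from the apex of the equilateral triangle, free-field propagation with a 1/r level law, and no room reflections; all of these are illustrative assumptions.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed

def off_center_differences(shift_m: float, base_m: float = 2.0) -> tuple[float, float]:
    """Arrival-time and level advantage of the right loudspeaker for a shifted listener.

    Assumed geometry: loudspeakers at (-base/2, 0) and (+base/2, 0); listener at the
    apex of the equilateral triangle, moved shift_m to the right. 1/r level law,
    no reflections. Returns (time difference in s, level difference in dB);
    positive values mean the right loudspeaker arrives earlier / is louder.
    """
    depth = base_m * math.sqrt(3.0) / 2.0          # apex distance from the base line
    d_left = math.hypot(shift_m + base_m / 2.0, depth)
    d_right = math.hypot(shift_m - base_m / 2.0, depth)
    dt = (d_left - d_right) / SPEED_OF_SOUND
    dl = 20.0 * math.log10(d_left / d_right)
    return dt, dl

for shift in (0.0, 0.05, 0.10, 0.20):
    dt, dl = off_center_differences(shift)
    print(f"shift {shift * 100:4.0f} cm -> right {dt * 1e3:.2f} ms earlier, {dl:.2f} dB louder")
```

Even a 10 cm shift gives the right loudspeaker a lead of roughly 0.3 ms, while the level difference stays below half a decibel, so in this geometry the time difference is the dominant change.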

To recreate a highly realistic image of the original sound event, a professional recording must be matched by high-quality loudspeakers for the reproduction, with the room acoustics taken into account. In such a scenario the loudspeakers are not audible as sources of the sound. The ideal condition for good localization within the stereo base is a point-source loudspeaker in a room with few reflections, a condition that often cannot be met, e.g. in a car. Many loudspeakers are two- or three-way systems: the sound is generated by different drivers depending on frequency, and these drivers are mounted some distance apart. The location of the sound source is therefore blurred. However, if the listening position is far enough away, the horizontal distance between the drivers can no longer be resolved.
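
How far away "far enough" is can be estimated by asking when the driver spacing subtends less than the minimum audible angle. This sketch takes the 2-degree frontal MAA quoted in the following paragraph as the threshold, assumes the listener faces the loudspeaker head-on, and ignores the fact that the drivers reproduce different frequency ranges. The 10 cm spacing is a hypothetical example.

```python
import math

def min_unresolved_distance(driver_spacing_m: float, maa_deg: float = 2.0) -> float:
    """Listening distance (m) beyond which two drivers subtend less than the MAA.

    A spacing s seen head-on at distance d subtends 2 * atan(s / (2 d)), so the
    angle drops below the MAA for d > s / (2 * tan(MAA / 2)).
    """
    return driver_spacing_m / (2.0 * math.tan(math.radians(maa_deg) / 2.0))

def subtended_angle_deg(driver_spacing_m: float, distance_m: float) -> float:
    """Angle (deg) subtended by the driver spacing at the listening position."""
    return math.degrees(2.0 * math.atan(driver_spacing_m / (2.0 * distance_m)))

# Hypothetical example: tweeter and woofer mounted 10 cm apart horizontally
d = min_unresolved_distance(0.10)
print(f"{d:.2f} m, check: {subtended_angle_deg(0.10, d):.2f} deg")
```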

The angular resolution of human hearing, also called the minimum audible angle (MAA) [A1], depends on the frequency and direction of the sound as well as on other characteristics of the sound itself. The MAA is smallest for sounds from directly in front at frequencies below 1 kHz, where it is on the order of 2 degrees. This corresponds to a loudspeaker displacement of 7 cm at a distance of 2 m. With intensity stereophony, a 2-degree deviation in an equilateral triangle arrangement corresponds to a level difference of 0.9 dB.
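
The 7 cm figure can be checked with elementary geometry, assuming the listener faces the loudspeaker and using the small-angle approximation: a lateral displacement $s$ seen from distance $d$ subtends an angle $\theta$ with

$$
s = 2d\tan\frac{\theta}{2} \approx d\,\theta \quad (\theta \text{ in radians}),
\qquad
s \approx 2\,\text{m} \cdot 2^\circ \cdot \frac{\pi}{180^\circ} \approx 0.070\,\text{m} = 7\,\text{cm}.
$$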

Stereo recordings try to capture the width and depth gradation of the sound stage as well as the spatial impression of the recording room. Auditory distance cues are important for the perception of depth. They include the level-dependent frequency spectrum of the sound source, the reverberation (more precisely, the direct-to-reverberant energy ratio), and the time difference between the arrival of the direct sound and of the first strong reflection at the listener.
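
The direct-to-reverberant ratio mentioned above can be estimated with the critical distance, at which direct and reverberant sound have equal energy. This sketch assumes an omnidirectional source, a diffuse reverberant field whose level does not depend on distance, and the common estimate r_c ≈ 0.057·√(V/T60). The hall volume and reverberation time are hypothetical example values.

```python
import math

def critical_distance(volume_m3: float, rt60_s: float) -> float:
    """Critical (reverberation) distance in m for an omnidirectional source.

    Common diffuse-field estimate: r_c ~= 0.057 * sqrt(V / T60).
    """
    return 0.057 * math.sqrt(volume_m3 / rt60_s)

def direct_to_reverberant_db(distance_m: float, r_c: float) -> float:
    """Direct-to-reverberant ratio in dB, assuming a 1/r direct sound and a
    distance-independent reverberant level equal to the direct level at r_c."""
    return 20.0 * math.log10(r_c / distance_m)

# Hypothetical hall: 12,000 m^3, T60 = 2 s
rc = critical_distance(12000.0, 2.0)
for r in (2.0, 5.0, 10.0, 20.0):
    print(f"{r:5.1f} m -> DRR {direct_to_reverberant_db(r, rc):+5.1f} dB")
```

Under these assumptions the ratio falls by about 6 dB per doubling of distance, which is why a source appears farther away as its reverberant share grows.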

To give the listener a spatial impression of the recording location, information about the recording space is useful. While the ambience can be captured by a suitable microphone arrangement that also picks up sound arriving from behind, the impression of the recording room remains limited because the sound is reproduced only from the front. In addition, the listening room, with its different acoustics, leaves its own spatial footprint. This is why a concert hall recording played back over a 2-channel system in a typical living room sounds less realistic than a studio recording.

Various microphone setups are common for capturing a stereo image with localization information and a sense of space, each with its own purpose. Every setup is based on two microphones that create level differences and/or time differences and can thus shift the phantom sound sources laterally within the loudspeaker base.

The influence of different microphone setups in different environments can be heard via auralization with the "sample player".

Different stereo recording techniques can be simulated with the "Image Assistant".

For localizing a sound event, human hearing is best supported by level differences between the left and right loudspeaker signals. Time differences between the two signals provide additional localization information as well as important information about the recording space. It is therefore useful to take both mechanisms into account during recording. As already mentioned, this is referred to as mixed stereophony or equivalence stereophony. With directional microphones angled outward, intensity-based imaging is realized mostly in the mid and high frequency range, because the directivity of the microphones increases with frequency. At the same time, the microphone spacing creates a time difference between the two microphone signals for sound events that do not arrive exactly from the center. The resulting localization accuracy lies between that of intensity stereophony and that of time-of-arrival stereophony. A microphone spacing comparable to the distance between the ears helps to confine the phasiness to the mid and high frequency range, which significantly improves mono compatibility.
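
The combination of both cues in such a pair can be sketched by computing the level and time differences together. The example assumes ideal, frequency-independent first-order microphones and a distant source; its default values (cardioids, 17 cm spacing, 110° opening angle) follow the well-known ORTF arrangement and are used here only as an example, since the text does not name a specific setup. Real microphones are less directional at low frequencies, so the level differences there would be smaller than this model predicts.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed

def first_order_gain(angle_deg: float, a: float = 0.5) -> float:
    """First-order polar pattern a + (1 - a) * cos(angle); a = 0.5 is a cardioid."""
    return a + (1.0 - a) * math.cos(math.radians(angle_deg))

def near_coincident_pair(source_deg: float, spacing_m: float = 0.17,
                         opening_deg: float = 110.0, a: float = 0.5) -> tuple[float, float]:
    """Level difference (dB, L - R) and time difference (s, positive = left leads).

    Assumptions: ideal first-order mics angled outward by +/- opening/2, capsules
    spacing_m apart along the left-right axis, far-field source, positive azimuth
    toward the left.
    """
    half = opening_deg / 2.0
    g_left = first_order_gain(source_deg - half, a)
    g_right = first_order_gain(source_deg + half, a)
    if g_left <= 0.0 or g_right <= 0.0:
        raise ValueError("pattern and angle give a null or antiphase pickup")
    level_db = 20.0 * math.log10(g_left / g_right)
    time_s = spacing_m * math.sin(math.radians(source_deg)) / SPEED_OF_SOUND
    return level_db, time_s

for az in (0, 15, 30, 45):
    ld, td = near_coincident_pair(az)
    print(f"{az:2d} deg -> {ld:4.1f} dB, {td * 1e3:.2f} ms")
```

With a 17 cm spacing the largest time difference (source at 90°) is about 0.5 ms, which puts the first notch of the mono sum near 1 kHz; this is consistent with the point above that a head-sized spacing keeps phasiness out of the low frequency range.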

For various reasons, mono compatibility is taken into account in the stereo recording process:

- A mixdown from stereo to mono, or reproduction in mono (e.g. over a single loudspeaker), should not lead to a noticeable change in sound.

- FM stereo transmission uses sum/difference signals instead of left/right. Portable FM stereo receivers and car radios attenuate the difference signal and fade to mono when reception becomes weak.

- Source coding methods such as MP3 need a lower data rate for signals with a strong center image, or produce fewer artifacts at a given data rate.
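
The sum/difference representation behind the FM point can be sketched in a few lines. The 1/2 scaling and the `width` parameter are assumed conventions for illustration; the actual FM stereo multiplex (pilot tone, subcarrier) is not modeled. The mid signal is what a mono listener hears, and a strong center image means a small side signal.

```python
def ms_encode(left: list[float], right: list[float]) -> tuple[list[float], list[float]]:
    """Sum (mid) and difference (side) signals, scaled by 1/2 (assumed convention)."""
    mid = [(x + y) / 2.0 for x, y in zip(left, right)]
    side = [(x - y) / 2.0 for x, y in zip(left, right)]
    return mid, side

def ms_decode(mid: list[float], side: list[float],
              width: float = 1.0) -> tuple[list[float], list[float]]:
    """Back to left/right; width < 1 attenuates the difference signal.

    width = 1.0 restores the original stereo signal; width = 0.0 gives mono
    (both channels equal the mid signal), similar in spirit to a receiver
    fading to mono under weak reception.
    """
    left = [m + width * s for m, s in zip(mid, side)]
    right = [m - width * s for m, s in zip(mid, side)]
    return left, right

left_in = [1.0, 0.5, -0.25]
right_in = [0.0, 0.5, 0.75]
mid, side = ms_encode(left_in, right_in)
print(ms_decode(mid, side, 1.0))   # original stereo
print(ms_decode(mid, side, 0.0))   # mono fold-down
```

Anything that cancels in the sum, such as components in antiphase between the channels, is lost entirely when the side signal is attenuated, which is why mono compatibility is checked during recording.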

[A1] T. Letowski, S. Letowski, "Localization Error: Accuracy and Precision of Auditory Localization."